TikTok adding new features to stop fake news and misinformation spreading

Unsplash: Solen Feyissa

TikTok is a hub of entertaining content from all sorts of well-known names.

TikTok is tackling the scourge of mis- and disinformation by launching a new feature that highlights the uncertainty of unverified content, aiming to slow its spread across the app.

From today in the US and Canada, and February 22 in the UK, any video that TikTok’s content moderators or fact checkers have tried to check but cannot immediately verify will have a banner appended to it saying that the video may contain unsubstantiated content.

If a viewer then tries to share that video, a further prompt will appear reminding them that the video contains content that couldn’t be verified, and asking them if they really want to share the video anyway.

In trials conducted by the platform, the combination of the banner questioning the content and the prompt reminding viewers resulted in a 24% decrease in sharing of videos with potentially false content.

Pixabay

“People come to TikTok to be creative, find community, and have fun,” said Gina Hernandez, product manager in TikTok’s trust and safety team, in a blog post announcing the feature. “Being authentic is valued by our community, and we take the responsibility of helping counter inauthentic, misleading, or false content to heart.”

The banner and prompt will be particularly useful in the case of breaking news events, where it’s often difficult to immediately substantiate whether information being shared is true or false. By disincentivizing engagement, TikTok hopes to slow the spread of fake news around live news events.

“We’ve designed this feature to help our users be mindful about what they share,” said Hernandez.

Pixabay

The feature is being introduced to accompany, not replace, TikTok’s current policies on misinformation. In the first half of 2020, TikTok removed around 1.25 million videos worldwide for issues of “integrity and authenticity” – around 1.2% of all the videos it removed during those six months, and around 6,930 a day.

Those videos would still be removed for being false, Dexerto understands – but in more borderline cases, where it’s difficult to ascertain the objective facts of a situation, the new features could help slow their spread on the app.

The feature was developed by TikTok in conjunction with behavioural science experts Irrational Labs. As well as reducing sharing, it cut the number of likes that questionable videos received by 7% in trials.

“Labelling of content has been previously used by other tech-firms to combat misinformation,” said Yevgeniy Golovchenko, who studies disinformation at the University of Copenhagen. “Existing research from other platforms suggests that labels may indeed help curb the labelled stories.”

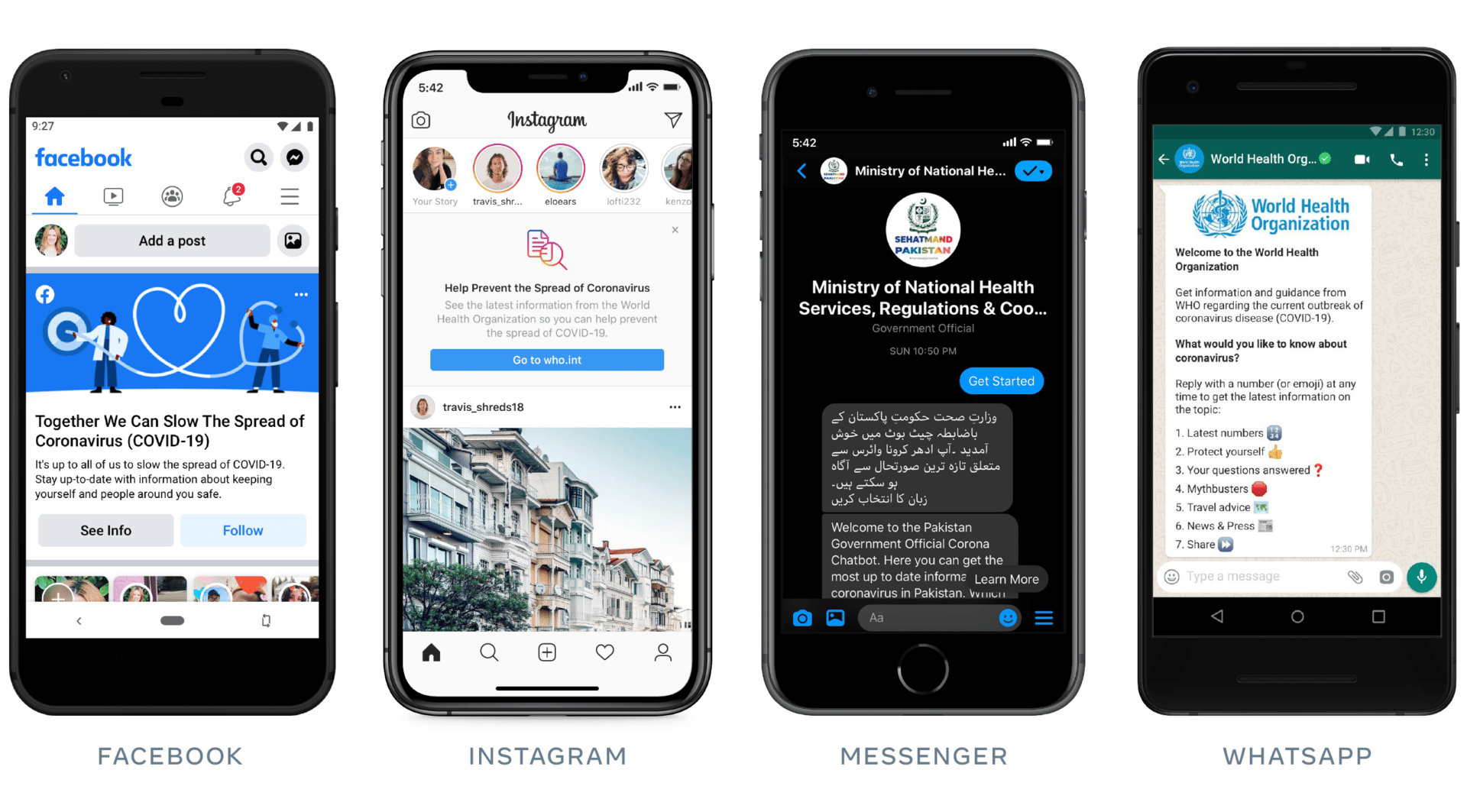

The academic points out that a similar method has been previously deployed by Facebook on some of its content.

Facebook

Golovchenko does, however, sound a note of caution about the feature. “There is also research which points towards potential dangers of using this technique,” he said. “By labelling some content, a social media platform can potentially make other non-labelled content – both false and true – appear more reliable.”

Research by the Massachusetts Institute of Technology showed the so-called “implied truth effect” was a risk.

“When it comes to such policies, regardless of whether they are implemented by TikTok, Instagram or other platforms, it is super important that the tech firms are transparent,” he added. “This should be done by providing researchers and journalists with accessible data on the labelling: What is labelled, when and why.”

Some of those concerns may be headed off by the scale of TikTok’s moderation and fact-checking team, which is also being beefed up through a new partnership with Logically, one of the world’s biggest dedicated fact-checking organisations. Logically is “supporting our efforts to determine whether content shared on the platform is false, misleading or misinformation,” said Hernandez, who added: “If fact checks confirm content to be false, we’ll remove the video from our platform.”